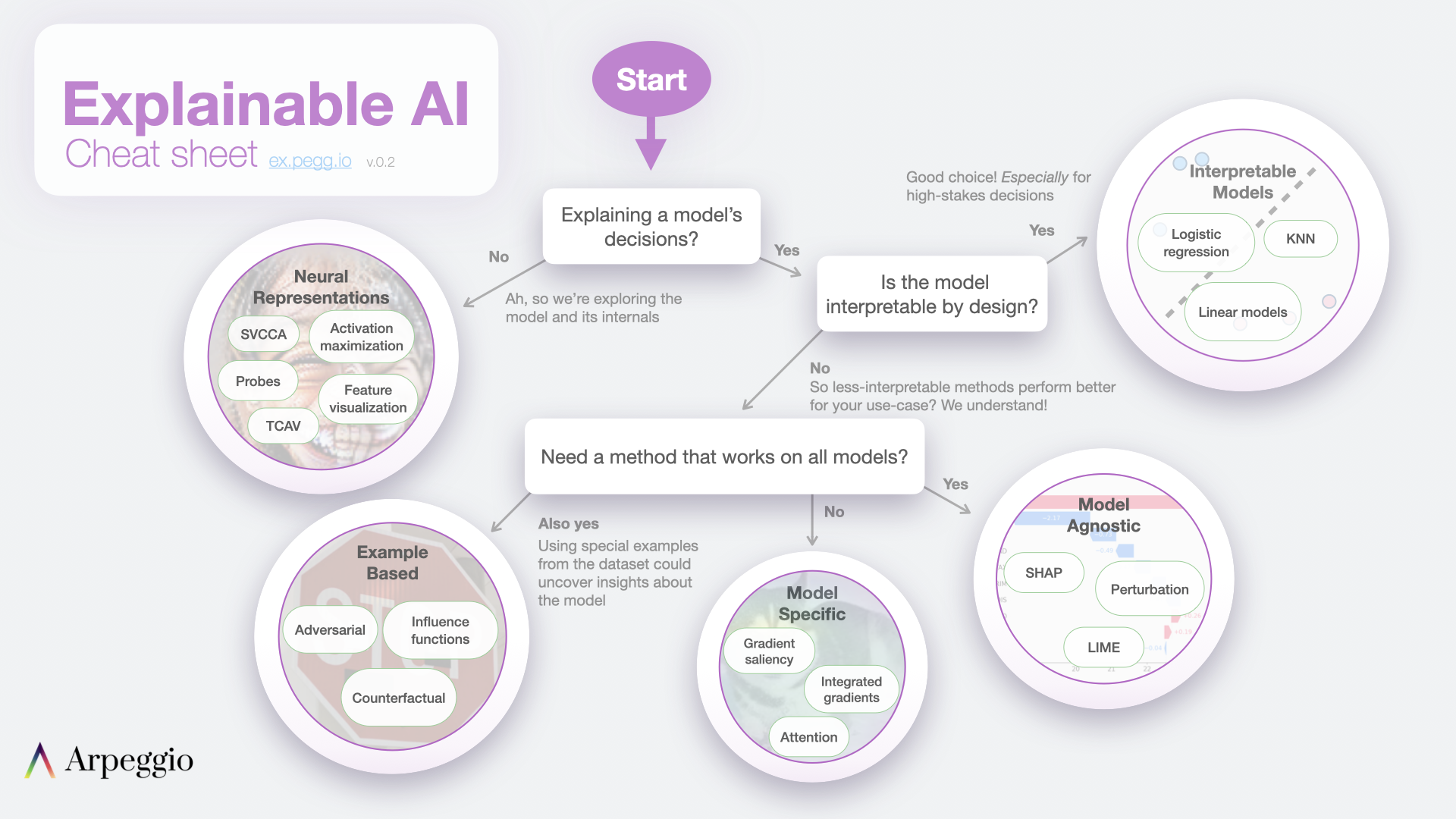

Explainable AI Cheat Sheet

Cheat sheet

Video

A brief overview of the Explainable AI cheat sheet with examples.

Resources

If you're interested in learning more, here is a non-exhaustive list of links and resources. We'll continue to add to it. Feel free to suggest valuable resources on the Issues page.

General

Interpretable Machine Learning (IML) - Christoph Molnar

Explainability for NLP - Isabelle Augenstein [video]

NLP Highlights: Interpreting NLP Model Predictions - Sameer Singh [audio]

Please Stop Doing "Explainable" ML - Cynthia Rudin [video]

Interpretable Models

StatQuest: K-nearest neighbors, Clearly Explained [video]

IML: Chapter 4 [online book]

Model agnostic methods

IML: Chapter 3 [online book]

SHAP [software]

Model specific methods

Axiomatic Attribution for Deep Networks - Integrated Gradients [paper]

Attention is not Explanation [paper]

Attention is not not Explanation [paper]

A Benchmark for Interpretability Methods in Deep Neural Networks [paper]

Sanity Checks for Saliency Maps [paper]

Example based methods

IML: Chapter 6: Example-Based Explanations [online book]

Neural representation methods

Feature Visualization [article]

Multimodal Neurons in Artificial Neural Networks [article] (more recent feature visualization work)

SVCCA, PWCCA [papers]

Interpretability Beyond Feature Attribution: Quantitative Testing with Concept Activation Vectors (TCAV) [paper]

What you can cram into a single $&!#* vector: Probing sentence embeddings for linguistic properties [paper]

Other methods

Beyond Accuracy: Behavioral Testing of NLP models with CheckList [paper]

Pitfalls

The Mythos of Model Interpretability [paper]

Towards falsifiable interpretability research [paper]